Phoenix is an SQL layer over HBase delivered as a client-embedded JDBC driver targeting low latency queries over HBase data. Phoenix takes our SQL query, compiles them into a series of HBase scans, and orchestrates the running of those scans to produce regular JDBC result sets. Cool..Isn't it?

Phoenix doesn't depend on MapReduce, but that doesn't mean that it doesn't believe in the philosophy of bringing computation closer to data. It very well does that, through :

Coprocessors : To perform operations on the server-side thus minimizing client/server data transfer

Custom filters : to prune data as close to the source as possible

And the best part is that there is no adverse effect on the performance.

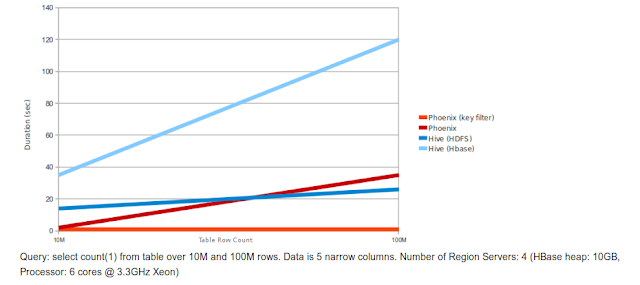

I am showing a couple a graphs below which present relative performance between Phoenix and some other related products (Courtesy : Phoenix Github page)

Phoenix vs Hive (running over HDFS and HBase)

Phoenix vs Impala (running over HBase)

The performance, as you can see from these graphs is quite good. For a detailed info you can visit this link.

Phoenix stores table metadata in an HBase table and keep it versioned, such that snapshot queries over prior versions will automatically use the correct schema. Direct use of the HBase API, along with coprocessors and custom filters, results in performance on the order of milliseconds for small queries, or seconds for tens of millions of rows.

Phoenix SQL Support

Phoenix supports all typical SQL query statement clauses, including SELECT, FROM, WHERE, GROUP BY, HAVING, ORDER BY, etc. It also supports a full set of DML commands as well as table creation and versioned incremental alterations through our DDL commands. We try to follow the SQL standards wherever possible. For a complete set of all the things which Phoenix supports you can visit the language reference page.

But, there are certain things which Phoenix doesn't support as of now. They include :

Joins : Single table only currently.

Derived tables : Nested queries along with TopN queries are coming soon.

Relational operators : Union, Intersect, Minus.

Miscellaneous built-in functions.

I don't feel it's bad considering that Phoenix has just born :)

For an in-depth info about Phoenix, you can visit Phoenix Wiki.

In the next post i'll try to write about building Phoenix with some hands-on. Stay connected till then.

No comments:

Post a Comment